Priya Dutta, Assessment Manager, introduces two common approaches to assessment.

At AlphaPlus, we work closely with clients to build the most suitable assessment programmes for their needs. It is useful, therefore, for everyone to have a general understanding of two very common approaches.

What is a criterion-referenced test?

A criterion-referenced test aims to measure a candidate’s performance against a set of pre-defined assessment criteria. The assessment criteria are carefully established by the examining body or organisation tasked with upholding professional standards. The passing standard for an examination is determined using a range of statistical standard-setting techniques, combined with professional expertise, to define the level that candidates will need to reach. The candidate’s performance in the assessment is then measured against the assessment criteria to see whether the passing standard has been met. The outcome can be as simple as a ‘pass’ or a ‘fail’, but it could also be a specific grade. If the test is used diagnostically as part of a programme of work, it may also describe how well each of a series of statements about the criteria has been met.
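To make the idea concrete, here is a minimal Python sketch of how a score might be turned into an outcome under criterion referencing. The grade names and boundary marks are hypothetical placeholders for whatever a real standard-setting exercise would produce. The point it illustrates is that each candidate is judged only against fixed boundaries, never against other candidates.

```python
# Hypothetical grade boundaries (minimum marks out of 100), fixed in advance
# by a standard-setting exercise. These values are illustrative only.
GRADE_BOUNDARIES = [
    (85, "Distinction"),
    (70, "Merit"),
    (60, "Pass"),
]


def criterion_referenced_grade(score: int) -> str:
    """Return the grade for a score by comparing it with fixed boundaries.

    The result depends only on the candidate's own score, not on how
    anyone else in the cohort performed.
    """
    for cut_score, grade in GRADE_BOUNDARIES:  # checked from highest to lowest
        if score >= cut_score:
            return grade
    return "Fail"


for score in (92, 74, 60, 41):
    print(score, criterion_referenced_grade(score))
```

Because the boundaries do not move, every candidate could in principle pass, or every candidate could fail, if that is what their performance against the criteria shows.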

What are the benefits of using criterion referencing?

Criterion referencing is especially useful in high-stakes assessments, such as professional competency exams, where public safety is crucial. Such assessments will often include a series of criteria that must be met to achieve a pass. These criteria may be referred to as ‘hurdles’ or ‘red flags’. Failure to demonstrate competence in these areas may be so serious as to warrant an immediate fail. Criterion-referenced testing is also very beneficial in assessment for learning. Used judiciously as part of the continuous teaching/learning process, criterion referencing helps learners to see how and where they need to supplement their learning to meet the criteria. This continuous reflection on performance encourages a more active learning style.
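The ‘hurdle’ logic and the diagnostic use described above can be sketched in a few lines of Python. This is an illustrative model, not a description of any particular exam: the `CriterionResult` structure, the example criteria and the pass mark are all hypothetical. An unmet hurdle fails the candidate regardless of their total score, and the same results can be reused to list the criteria a learner still needs to work on.

```python
from dataclasses import dataclass


@dataclass
class CriterionResult:
    name: str        # description of the assessment criterion (hypothetical)
    met: bool        # whether the candidate demonstrated competence
    is_hurdle: bool  # True if not meeting this criterion means an immediate fail


def overall_outcome(results: list[CriterionResult], total_score: int, pass_mark: int) -> str:
    """Apply hurdles first, then the overall pass mark."""
    failed_hurdles = [r.name for r in results if r.is_hurdle and not r.met]
    if failed_hurdles:
        # A single 'red flag' outweighs any number of marks elsewhere.
        return "Fail (hurdle not met: " + ", ".join(failed_hurdles) + ")"
    return "Pass" if total_score >= pass_mark else "Fail"


def criteria_to_work_on(results: list[CriterionResult]) -> list[str]:
    """Diagnostic feedback: the criteria the learner has not yet met."""
    return [r.name for r in results if not r.met]


results = [
    CriterionResult("Checks patient identity before treatment", met=False, is_hurdle=True),
    CriterionResult("Explains procedure clearly", met=True, is_hurdle=False),
    CriterionResult("Records notes accurately", met=False, is_hurdle=False),
]
print(overall_outcome(results, total_score=88, pass_mark=60))
print(criteria_to_work_on(results))
```

Here a high total of 88 still results in a fail, because the hurdle criterion was not met, while the feedback list points the learner to exactly where further study is needed.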

What is a norm-referenced test?

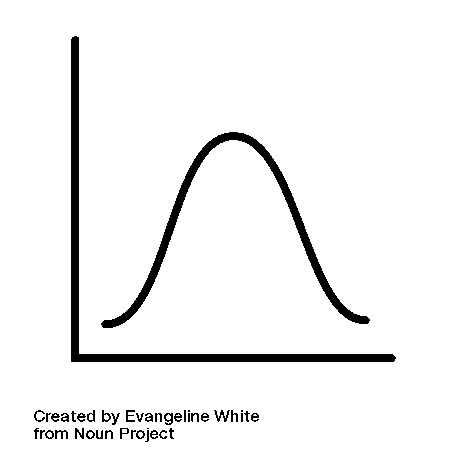

Norm-referenced tests are usually designed to compare and rank test takers in relation to one another. They establish whether candidates performed better or worse than the ‘average’ candidate. This is usually done by trialling items with a representative sample of the cohort[i], so that candidates’ scores can be compared against the results of the trial group and plotted as a distribution graph. A well-calibrated test will have a spread of marks similar to a normal distribution (see diagram above), where most test takers’ marks cluster around the mean, with the remaining candidates spread out towards the upper and lower ends of the mark range. If the distribution is skewed too far to the left or right, this signals that there may be problems with the test design, as the test may not be differentiating well between learners.
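As a rough illustration of the comparison described above, the following Python sketch places a candidate relative to a trial group and checks how skewed the trial distribution is. The trial marks are made up, and the skewness warning threshold of 1.0 is a hypothetical rule of thumb rather than a fixed standard. Percentile rank and z-score are two common ways of expressing ‘better or worse than average’; the skewness figure is the standard moment-based (Fisher-Pearson) coefficient.

```python
import statistics


def percentile_rank(trial_scores: list[float], score: float) -> float:
    """Percentage of the trial group scoring below `score`, counting ties as half."""
    below = sum(1 for s in trial_scores if s < score)
    equal = sum(1 for s in trial_scores if s == score)
    return 100.0 * (below + 0.5 * equal) / len(trial_scores)


def z_score(trial_scores: list[float], score: float) -> float:
    """Standard deviations above (+) or below (-) the trial group mean."""
    return (score - statistics.mean(trial_scores)) / statistics.stdev(trial_scores)


def skewness(scores: list[float]) -> float:
    """Moment-based skewness: about 0 for a symmetric spread of marks,
    positive for a long tail of high marks, negative for a long tail of low marks.
    Assumes the scores are not all identical (otherwise m2 is zero)."""
    mean = statistics.fmean(scores)
    m2 = sum((s - mean) ** 2 for s in scores) / len(scores)
    m3 = sum((s - mean) ** 3 for s in scores) / len(scores)
    return m3 / m2 ** 1.5


SKEW_WARNING_THRESHOLD = 1.0  # hypothetical rule of thumb, for illustration only

trial = [35, 42, 48, 50, 52, 55, 55, 58, 61, 64, 68, 75]  # made-up trial marks
candidate = 61

print(f"Percentile rank: {percentile_rank(trial, candidate):.1f}")
print(f"z-score: {z_score(trial, candidate):+.2f}")

g1 = skewness(trial)
if abs(g1) > SKEW_WARNING_THRESHOLD:
    print(f"Skewness {g1:.2f}: test may not be differentiating well")
else:
    print(f"Skewness {g1:.2f}: within the illustrative threshold")
```

A strongly negative skewness (most candidates bunched near the top, with a tail of low marks) might suggest a test that was too easy for the cohort, while a strongly positive value might suggest one that was too hard.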

What are the benefits of using norm referencing?

There are instances where ranking a candidate’s performance against that of their peers is useful. At school level, norm referencing is often used internally by teachers to assess whether learners are doing well for their age against a national standard. Such tests are especially useful for ensuring that learners at either end of the ‘bell curve’ receive the teaching they need, either to bridge the gap or to be challenged to go further. They are less useful, however, in the world of work, where it is necessary to show mastery of a set of defined criteria to demonstrate professional competency. It would cause considerable public consternation if top grades in a medical proficiency exam were awarded to the highest scorers even when they had failed to answer basic questions about patient safety correctly!

There has been much discussion in recent times about the relative merits and drawbacks of these two assessment types. This is why assessment design is so vital: establishing the purpose of the assessment, the knowledge domain to be assessed and the desired outcomes for candidates will give a good indication of which type of assessment is most suitable.

[i] Typically, candidates of the same age or competency level as those sitting the live test.

AlphaPlus